Streamr’s Head of Growth, Shiv Malik, recently held an AMA on Data Unions for the GAINS Telegram community. Their questions led to an insightful discussion that we’ve condensed into this blog post.

Table of Contents

- What is the project about in a few simple sentences?

- Okay, sounds interesting. Do you have a concrete example you could give us to make it easier to understand?

- Very interesting. What stage is the project/product at? It’s live, right?

- How much can a regular person browsing the internet expect to make for example?

- I assume the data is anonymised, by the way?

- How does Swash compare to Brave?

- Right, so how do you convince these Big Tech companies that are producing these big apps to integrate with Streamr? Does it mean they wouldn’t be able to monetise data as well on their end if it becomes more available through an aggregation of individuals?

- So for big companies (mobile operators in this case), it’s less logistics, handing over the implementation to you, and simply taking a cut?

- Compared to having to make sense of that data themselves (in the past) and selling it themselves

- What is the token use case? How did you make sure it captures the value of the ecosystem you’re building?

- Can the Streamr Network be used to transfer data from IoT devices? Is the network bandwidth sufficient? How is it possible to monetise the received data from a huge number of IoT devices?

- While we’re on the technical side, can you be sure that valuable data is safe and not shared with service providers? Are you using any encryption methods?

- Streamr has three Data Unions; Swash, Tracey and MyDiem. Why does Tracey help fisherfolk in the Philippines monetize their catch data? Do they only work with this country or do they plan to expand?

- Are there plans in the pipeline for Streamr to focus on the consumer-facing products themselves or will the emphasis be on the further development of the underlying engine?

- We all know that Blockchain has many disadvantages as well, so why did Streamr choose blockchain as a combination for its technology? What’s your plan to merge Blockchain with your technologies to make it safer and more convenient for your users?

- How does the Streamr team ensure good data is entered into the blockchain by participants?

- What are the requirements for integrating applications with Data Union? What role does the DATA token play in this case?

- Regarding security and legality, how does Streamr guarantee that the data uploaded by a given user belongs to him and he can monetise and capitalise on it?

What is the project about in a few simple sentences?

At Streamr we are building a real-time network for tomorrow’s data economy. It’s a decentralized, peer-to-peer network which we are hoping will one day replace centralized message brokers like Amazon’s AWS services. On top of that, one of the things I’m most excited about is Data Unions. With Data Unions anyone can join the data economy and start earning money from the data they already produce. Streamr’s Data Union framework provides a really easy way for devs to start building their own data unions and can also be easily integrated into any existing apps.

Okay, sounds interesting. Do you have a concrete example you could give us to make it easier to understand?

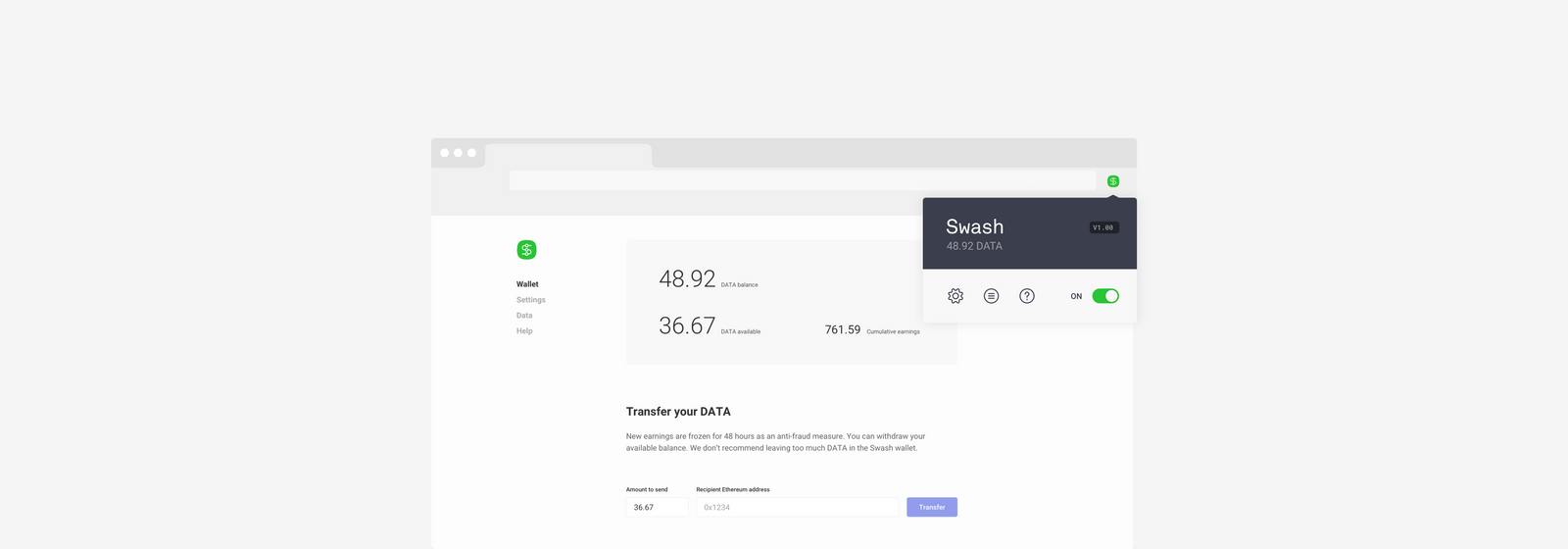

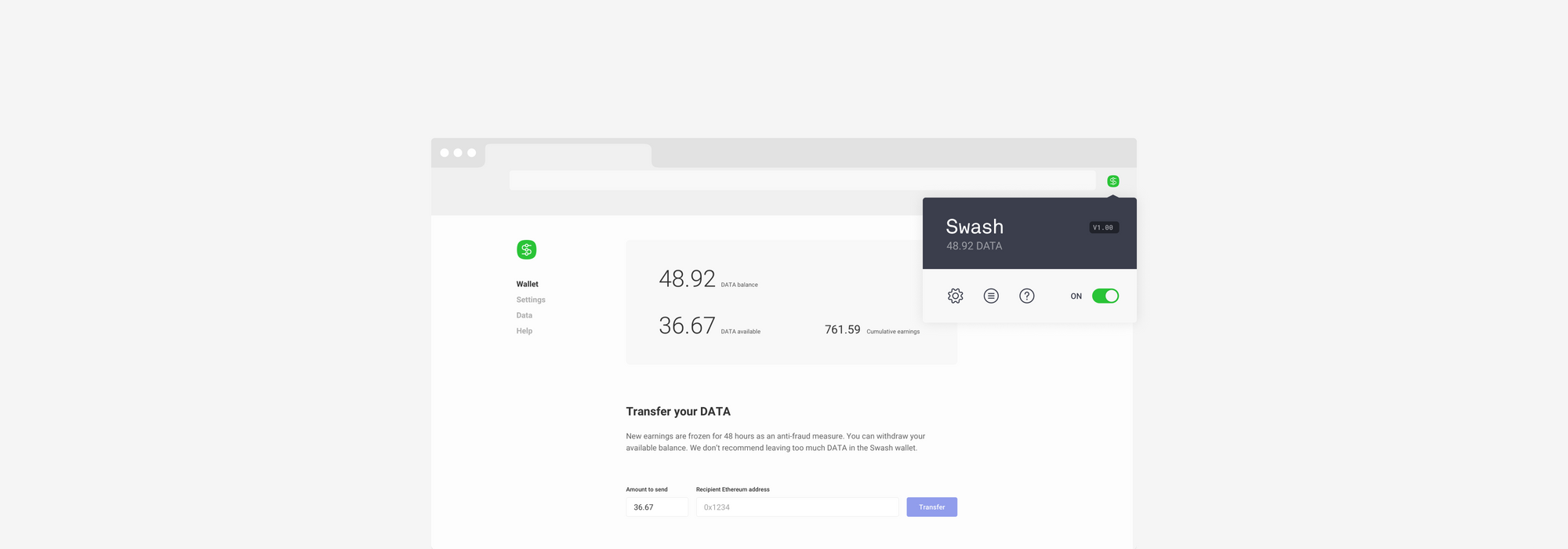

The best example of a Data Union is the first one that has been built out of our stack. It’s called Swash and it’s a browser plugin.

You can download it here in a few clicks.

Basically it helps you monetise the data you already generate (day in day out) as you browse the web. It’s the sort of data that Google already knows about you. But this way, with Swash, you can actually monetise it yourself.

The more people that join the Data Union, the more powerful it becomes and the greater the rewards are for everyone as the data product sells to potential buyers.

Very interesting. What stage is the project/product at? It’s live, right?

Yes. It’s currently live in public beta. And the Data Union framework will be launched in just a few weeks. The Network is on course to be fully decentralized at some point next year.

How much can a regular person browsing the internet expect to make for example?

So that’s a great question. The answer is, no-one quite knows yet. We do know that this sort of data (consumer insights) is worth hundreds of millions and really isn’t available in high quality. So, with a Data Union of a few million people, everyone could be getting 20-50 USD a year. But it’ll take a few years at least to realise that growth. Of course Swash is just one Data Union amongst many possible others (which are now starting to get built out on our platform!)

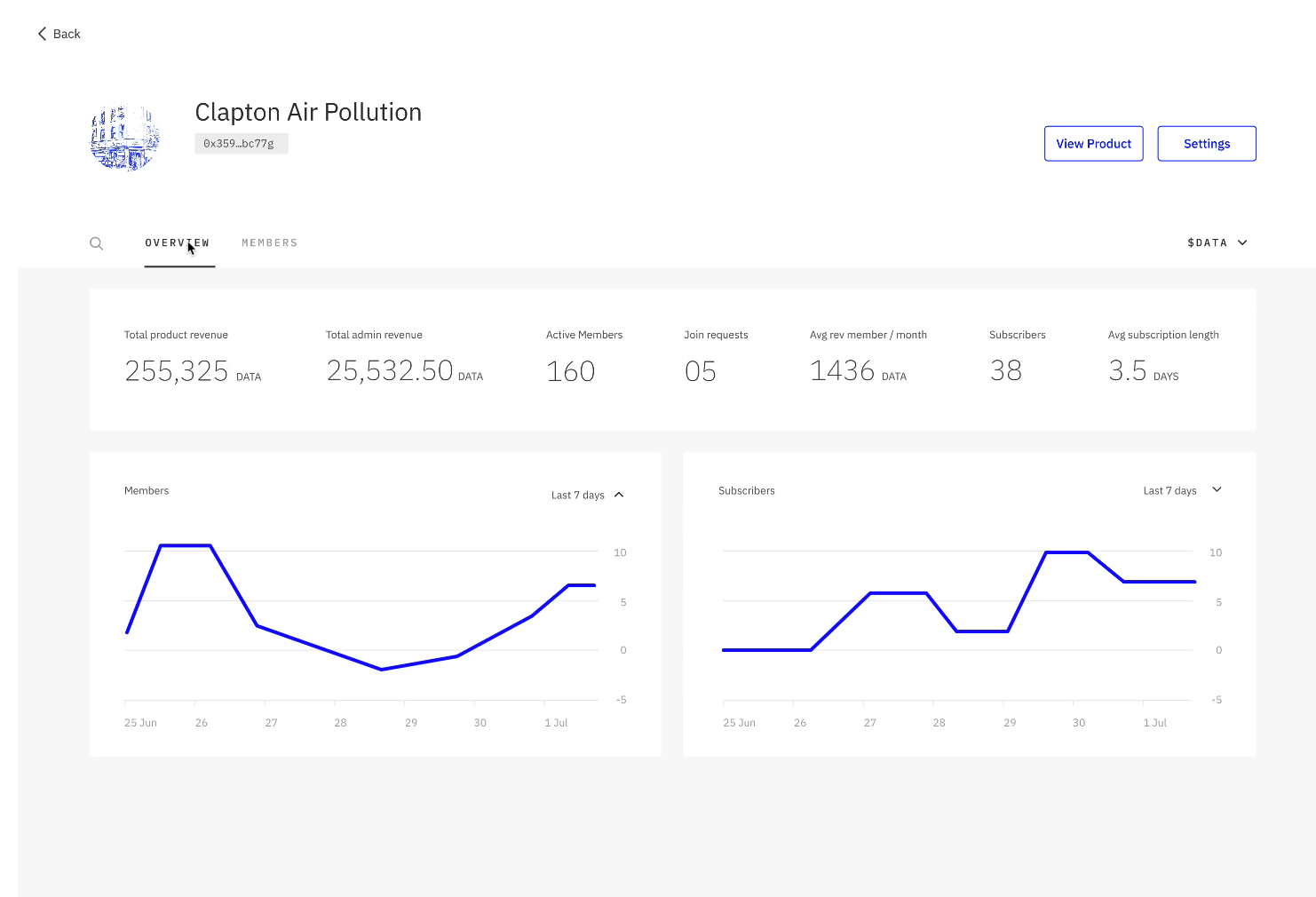

With Swash, they now have 3186 members. They need to get to 50,000 before they become really viable but they are yet to do any marketing. So all that is organic growth.

You can explore these numbers in more detail by downloading the executive summary of research commissioned to investigate the market and consumer attitudes towards Data Unions.

I assume the data is anonymised, by the way?

Yes. And there are in fact a few privacy-protecting tools Swash supplies to its users.

How does Swash compare to Brave?

So Brave offers a consent model where users are rewarded if they opt in to see selected ads targeted to them from their browsing history. They don’t sell your data as such.

Swash can of course be used as a plugin with Brave, so you can make passive income browsing the internet while also consenting to advertising if you want to earn BAT.

Of course, it’s Streamr that is powering Swash. And we’re looking at powering other Data Unions, say for example mobile applications.

The holy grail might be having already existing apps and platforms out there, integrating Data Union tech into their apps so people can consent (or not) to having their data sold. And then getting a cut of that revenue when it does sell.

The other thing to recognise is that the Big Tech companies monopolise data on a vast scale. Data that we of course produce for them. That monopoly is stifling innovation.

Take for example a competitor map app. To effectively compete with Google Maps or Waze, they need millions of users feeding real-time data into it. Without that, it’s like Google Maps used to be: static and a bit useless.

Right, so how do you convince these Big Tech companies that are producing these big apps to integrate with Streamr? Does it mean they wouldn’t be able to monetise data as well on their end if it becomes more available through an aggregation of individuals?

If a map application does manage to scale to that level then inevitably Google buys them out, which is what happened with Waze. But if you have a Data Union that bundles together the raw location data of millions of people then any application builder can come along and license that data for their app. This encourages all sorts of innovation and breaks the monopoly.

We’re currently having conversations with mobile network operators to see if they want to pilot this new approach to data monetisation. And that’s what’s even more exciting. Just be explicit with users: do you want to sell your data? Okay, if yes, then which data points do you want to sell?

The mobile network operator (like T-Mobile, for example) can then organise the sale of the data of those who consent and everyone gets a cut.

Streamr, in this example, provides the backend to port and bundle the data, and also the token and payment rail for the payments.

So for big companies (mobile operators in this case), it’s less logistics, handing over the implementation to you, and simply taking a cut?

It’s a vision that we’ll be able to talk about more concretely in a few weeks’ time 😁

Compared to having to make sense of that data themselves (in the past) and selling it themselves

Sort of.

We provide the backend to port the data and the template smart contracts to distribute the payments.

They get to focus on finding buyers for the data and ensuring that the data that is being collected from the app is the kind of data that is valuable and useful to the world.

(Through our sister company TX, we also help build out the applications for them and ensure a smooth integration).

The other thing to add is that the reason this vision is working is that the current, deeply flawed data economy is under attack. Not just from privacy laws such as GDPR, but also from Google shutting down cookies, bidstream data being investigated by the FTC (for example) and Apple making changes to iOS 14 to make third-party data sharing more explicit for users.

All this means that the only real places for thousands of multinationals to buy the sort of consumer insights they need to make good business decisions will be platforms owned by Google/FB etc., SDKs, or the Data Union method: overt, rich consent from the consumer in return for a cut of the earnings.

What is the token use case? How did you make sure it captures the value of the ecosystem you’re building?

The token is used for payments on the Marketplace (for Data Union products, for example) and also for paying the broker nodes in the Network. (We haven’t talked much about the P2P network, but it’s our project’s secret sauce.)

The broker nodes will be paid in DATAcoin for providing bandwidth. We are currently working together with BlockScience on our token economics. We’ve just started the second phase in their consultancy process and will soon be able to share more on the Streamr Network’s token economics.

But if you want to sum up the Network in a sentence or two: imagine the BitTorrent network being run by nodes who get paid to do so. Except that instead of passing around static files, it’s real-time data streams.

That of course means it’s really well suited for the IoT economy.

The latest developments on tokenomics were discussed in a recent AMA with Streamr CEO, Henri Pihkala.

Can the Streamr Network be used to transfer data from IoT devices? Is the network bandwidth sufficient? How is it possible to monetise the received data from a huge number of IoT devices?

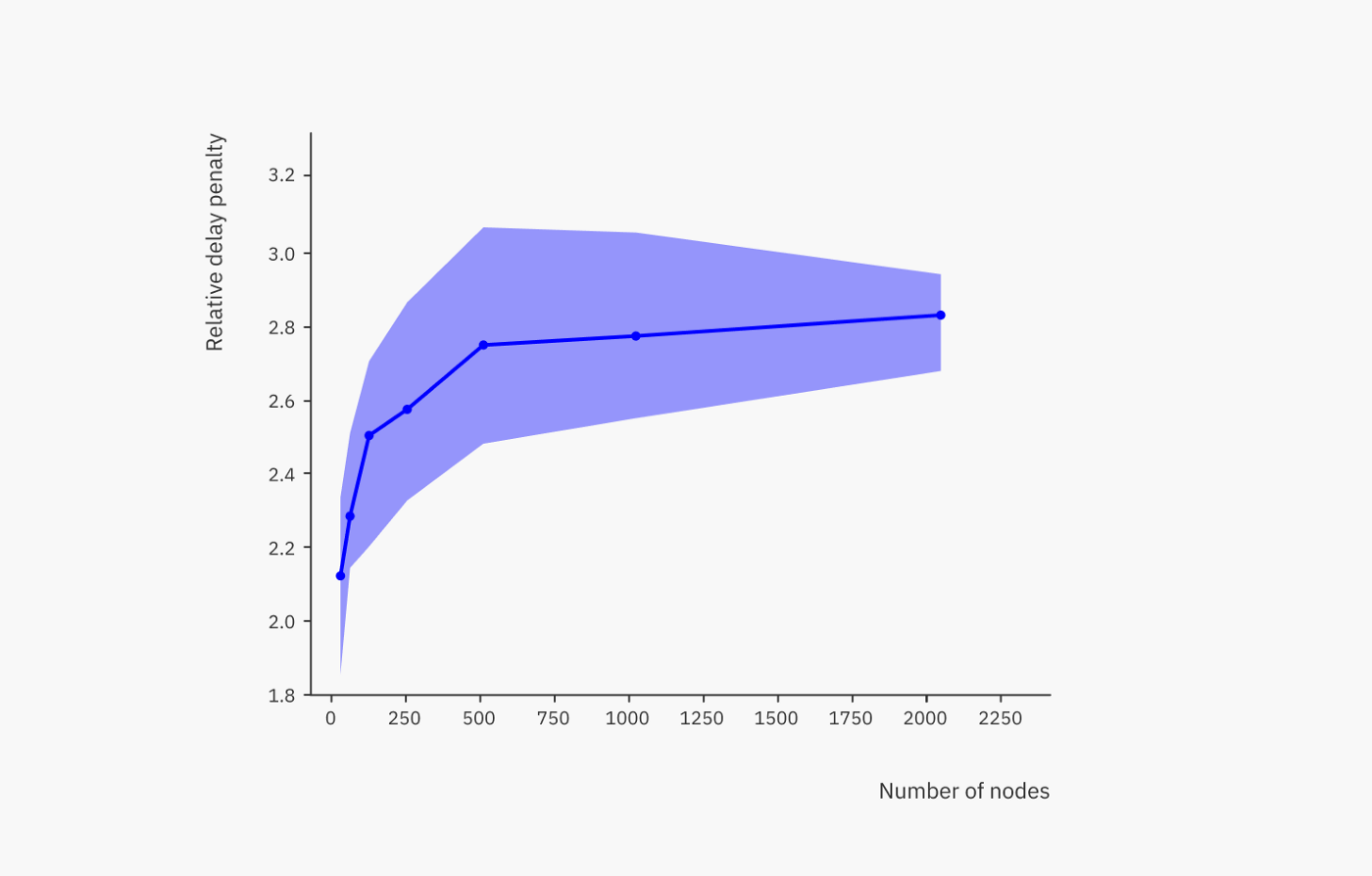

Yes, IoT devices are a perfect use case for the Network. When it comes to the network’s bandwidth and speed, the Streamr team recently conducted extensive research to find out how well the network scales.

The result was that it is on par with centralized solutions. We ran experiments with network sizes between 32 and 2048 nodes, and in the largest network of 2048 nodes, 99% of deliveries happened within 362 ms globally.

To put these results in context, PubNub, a centralized message brokering service, promises to deliver messages within 250 ms, and that’s a centralized service! So we’re super happy with those results.

Here’s a link to the paper.
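For readers wondering what "99% of deliveries happened within 362 ms" means in practice, here is a minimal sketch of how a 99th-percentile latency can be computed from a list of delivery times. The `percentile` helper and the sample latencies are hypothetical and purely illustrative; they are not taken from the paper, and the paper may use a different percentile definition than the nearest-rank method shown here.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    # Rank is 1-based; clamp to 1 so that pct=0 still returns the minimum.
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical delivery latencies in milliseconds (not measured data).
latencies_ms = [120, 95, 210, 180, 340, 150, 130, 400, 110, 160]

p50 = percentile(latencies_ms, 50)  # median delivery time
p99 = percentile(latencies_ms, 99)  # 99% of deliveries are at or below this
print(f"p50 = {p50} ms, p99 = {p99} ms")
```

A percentile is usually more informative than an average here, because a handful of very slow deliveries can hide behind a good mean.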

While we’re on the technical side, can you be sure that valuable data is safe and not shared with service providers? Are you using any encryption methods?

Yes, the messages in the Network are encrypted. Currently all nodes are still run by the Streamr team. This will change in the Brubeck release, our last milestone on the roadmap, which adds end-to-end encryption and automatic key exchange mechanisms, ensuring that node operators cannot access any confidential data.

If, by the way, you want to get very technical, the encryption algorithms we are using are: AES (AES-256-CTR) for encryption of data payloads, RSA (PKCS #1) for securely exchanging the AES keys and ECDSA (secp256k1) for data signing (same as Bitcoin and Ethereum).

Streamr has three Data Unions; Swash, Tracey and MyDiem. Why does Tracey help fisherfolk in the Philippines monetize their catch data? Do they only work with this country or do they plan to expand?

So yes, Tracey is one of the first Data Unions on top of the Streamr stack. Currently we are working together with the WWF-Philippines and the UnionBank of the Philippines on doing a first pilot with local fishing communities in the Philippines.

WWF is interested in the catch data to protect wildlife and make sure that no overfishing happens. And at the same time the fisherfolk are incentivised to record their catch data by being able to access micro loans from banks, which in turn helps them make their business more profitable.

So far, we have lots of interest from other places in South East Asia which would like to use Tracey, too. In fact, TX have already had explicit interest in building out the use cases in other countries, and not just for seafood tracking but also for many other agricultural products.

Are there plans in the pipeline for Streamr to focus on the consumer-facing products themselves or will the emphasis be on the further development of the underlying engine?

We’re all about what’s under the hood. We want third party devs to take on the challenge of building the consumer facing apps. We know it would be foolish to try and do it all!

We all know that Blockchain has many disadvantages as well, so why did Streamr choose blockchain as a combination for its technology? What’s your plan to merge Blockchain with your technologies to make it safer and more convenient for your users?

So we’re not a blockchain ourselves, and that’s important to note. The P2P network only uses blockchain tech for the payments. Why on earth, for example, would you want to store every single piece of info on a blockchain? You should only store what you want to store. And that should probably happen off chain.

So we think we got the mix right there.

How does the Streamr team ensure good data is entered into the blockchain by participants?

Another great question there! From the product-buying end, this will be done by reputation. But ensuring the quality of the data as it passes through the network – if that is what you also mean – is all about getting the architecture right. In a decentralized network, that’s not easy as data points in streams have to arrive in the right order. It’s one of the biggest challenges but we think we’re solving it in a really decentralized way.
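To make the ordering problem concrete, here is a minimal sketch of one common way a subscriber can put out-of-order messages back in sequence: hold early arrivals in a buffer until the gap is filled. This is a generic technique, not a description of how the Streamr Network does it; the `ReorderBuffer` class and the idea that every message carries an integer sequence number are assumptions made for illustration.

```python
class ReorderBuffer:
    """Releases messages in sequence order, holding back any that arrive early.

    Assumes (hypothetically) that each message in a stream carries an
    integer sequence number that increases by one per message.
    """

    def __init__(self, first_seq=0):
        self.next_seq = first_seq  # sequence number we are waiting for
        self.pending = {}          # early arrivals, keyed by sequence number

    def receive(self, seq, payload):
        """Accept one message and return the payloads now deliverable in order."""
        if seq < self.next_seq or seq in self.pending:
            return []  # duplicate: already delivered or already buffered
        self.pending[seq] = payload
        ready = []
        # Drain the buffer for as long as the next expected message is present.
        while self.next_seq in self.pending:
            ready.append(self.pending.pop(self.next_seq))
            self.next_seq += 1
        return ready


buf = ReorderBuffer()
print(buf.receive(1, "second"))  # arrives early, so nothing is released yet
print(buf.receive(0, "first"))   # fills the gap, so both are released in order
```

A real network also has to decide how long to wait for a missing message and how to request it again, which this sketch leaves out.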

What are the requirements for integrating applications with Data Union? What role does the DATA token play in this case?

There are no specific requirements as such, just that your application needs to generate some kind of real-time data. Data Union members and administrators are both paid in DATA by data buyers coming from the Streamr marketplace.
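As a rough illustration of how a single payment from a data buyer might be shared out, here is a minimal sketch of a revenue split between an administrator and the members. The equal split between members, the percentage-based admin fee, and the `split_revenue` function itself are all assumptions for the sake of the example; the actual Data Union contracts may divide revenue differently.

```python
def split_revenue(amount, members, admin_fee_percent):
    """Split one payment between the admin and the members.

    amount is in the token's smallest indivisible unit, so only integer
    arithmetic is used. Members share equally (an assumption), and any
    remainder that cannot be divided evenly goes to the admin.
    Returns (admin_amount, {member: member_amount}).
    """
    if not members:
        raise ValueError("a Data Union needs at least one member")
    if not 0 <= admin_fee_percent <= 100:
        raise ValueError("admin_fee_percent must be between 0 and 100")
    admin_cut = amount * admin_fee_percent // 100
    per_member = (amount - admin_cut) // len(members)
    remainder = amount - admin_cut - per_member * len(members)
    return admin_cut + remainder, {m: per_member for m in members}


# Hypothetical sale: 1000 units, three members, 30% admin fee.
admin_amount, payouts = split_revenue(1000, ["alice", "bob", "carol"], 30)
print(admin_amount, payouts)
```

Working in whole units avoids rounding surprises, and the function guarantees that the admin amount plus all member payouts always adds up to the original payment.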

Regarding security and legality, how does Streamr guarantee that the data uploaded by a given user belongs to him and he can monetise and capitalise on it?

So that’s a sort of million dollar question for anyone involved in a digital industry. Within our system there are ways of ensuring that, but in the end the negotiation of data licensing will still, in many ways, be done human to human and via legal licenses rather than smart contracts, at least when it comes to sizeable data products. There are more answers to this, but it’s a long one!