Update: This post describes connecting to the earlier, centralized version of the Streamr Network, which has since been deprecated. Check out the new post detailing the connection between the decentralized Streamr Network and Helium: https://blog.helium.com/helium-x-streamr-ea89c4b61a14

Picture this. You’ve deployed coverage on the Helium Network using one of the approved Hotspots. As is common for members of The People’s Network, you quickly realize that the natural next step is to deploy sensors and put the coverage you’ve created to use. You decide to keep it simple and start capturing temperature and humidity data in your neighborhood. The LHT65 from Dragino is an excellent option for this. You start slow, but before you know it, you’ve got an entire fleet of sensors transmitting real-time temperature and humidity data. To make use of it all, you’ve built a simple application for hyper-local environmental monitoring in your neighborhood. Life is good. But could it be better? Yes.

Enter Streamr. Streamr is a decentralized platform for real-time data. In short, the Streamr protocol lets you transport, broadcast, and monetize data. There is a massive market for well-organized, specific data, with IoT data arguably the largest category. On top of the base protocol, the Streamr team has built Data Unions, a higher-level framework that enables large groups of people to productize and find markets for the data they produce together. All transactions are facilitated by $DATA, Streamr’s ERC-20-compatible token, which keeps settlement and distribution for data streams simple and decentralized.

Recently, the team at Streamr put together a simple but powerful demo showcasing the potential of combining Helium, the world’s largest and fastest-growing LoRaWAN network, with Streamr. This means you can now distribute and monetize that hyper-local environmental data, along with many other data streams you could produce on the Helium Network.

Connecting Data from Helium to Streamr

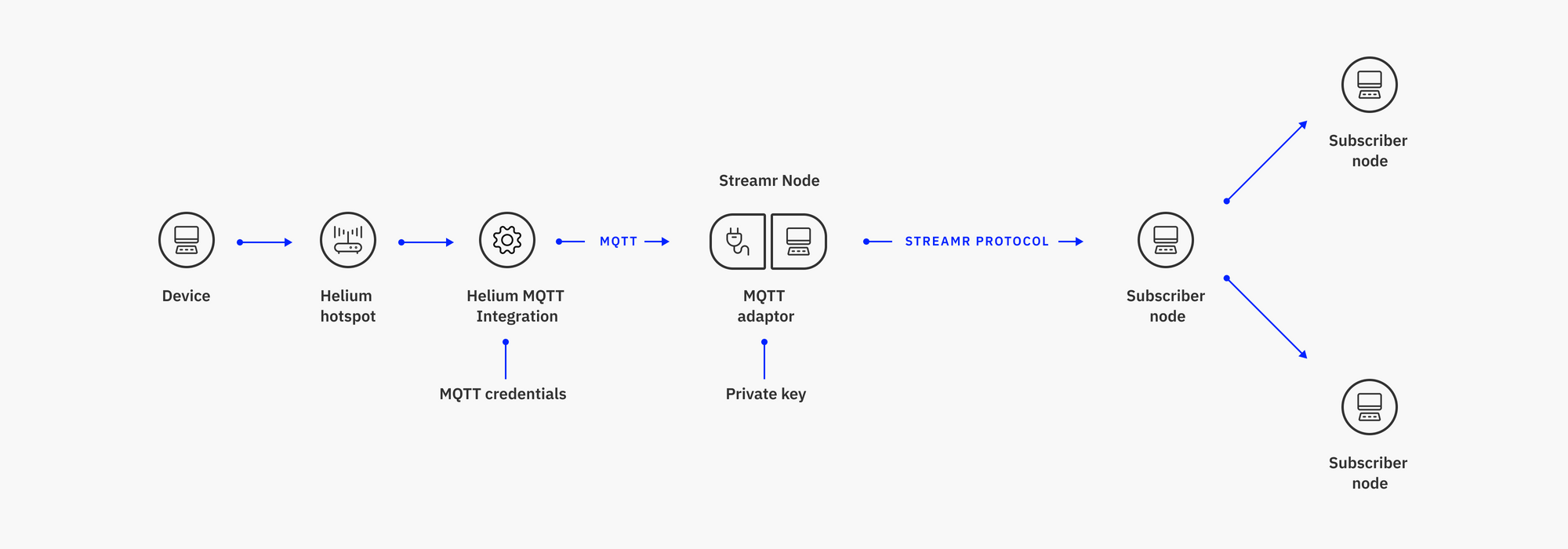

Here’s how it works. In a nutshell, data from sensors deployed to the Helium Network is piped to the Streamr Network via Helium Console’s MQTT integration and the MQTT interface on Streamr nodes.
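To make the first hop concrete, here is a minimal TypeScript sketch of what a consumer of Helium Console’s MQTT uplinks might do with each message. It assumes the uplink arrives as JSON with `name`, `dev_eui`, `port`, `payload` (the raw sensor bytes, base64-encoded) and `reported_at` fields, which matches our understanding of the Console’s integration message format; verify the field names against the Console documentation for your version. The interfaces and the `parseUplink` helper are hypothetical, and the base64 decoder is hand-rolled only so the sketch has no dependencies.

```typescript
// Hypothetical shape of a Helium Console uplink (assumption: verify field names).
interface HeliumUplink {
  name: string;        // device name set in the Console
  dev_eui: string;     // device EUI
  port: number;        // LoRaWAN FPort the device sent on
  payload: string;     // raw payload bytes, base64-encoded
  reported_at: number; // timestamp (assumed: milliseconds since epoch)
}

interface ParsedUplink {
  device: string;
  devEui: string;
  port: number;
  bytes: Uint8Array;
  reportedAt: number;
}

const B64_ALPHABET =
  "ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789+/";

// Minimal base64 decoder so the sketch runs without Node- or browser-specific APIs.
function base64ToBytes(b64: string): Uint8Array {
  const clean = b64.replace(/=+$/, ""); // padding carries no data
  const out: number[] = [];
  let buffer = 0; // accumulated bits (only the low 16 are kept)
  let bits = 0;   // number of not-yet-emitted bits in `buffer`
  for (let i = 0; i < clean.length; i++) {
    const value = B64_ALPHABET.indexOf(clean[i]);
    if (value < 0) throw new Error(`invalid base64 character: ${clean[i]}`);
    buffer = ((buffer << 6) | value) & 0xffff;
    bits += 6;
    if (bits >= 8) {
      bits -= 8;
      out.push((buffer >> bits) & 0xff); // emit the oldest complete byte
    }
  }
  return new Uint8Array(out);
}

// Parse one MQTT message body from the Console into a typed record.
function parseUplink(raw: string): ParsedUplink {
  const msg = JSON.parse(raw) as Partial<HeliumUplink>;
  if (typeof msg.payload !== "string" || typeof msg.port !== "number") {
    throw new Error("uplink is missing payload or port");
  }
  return {
    device: msg.name ?? "unknown",
    devEui: msg.dev_eui ?? "",
    port: msg.port,
    bytes: base64ToBytes(msg.payload),
    reportedAt: msg.reported_at ?? Date.now(),
  };
}
```

The decoded `bytes` are what a decoder Function (discussed later in this post) turns into human-readable values.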

Immediately after data flows into the Streamr Network, all the options in the ecosystem become available, such as broadcasting the data to applications, packaging it as a data product on the Marketplace, or joining a Data Union to package and sell the data with other, similar data. Let’s take a look at how to configure the integration in practice.

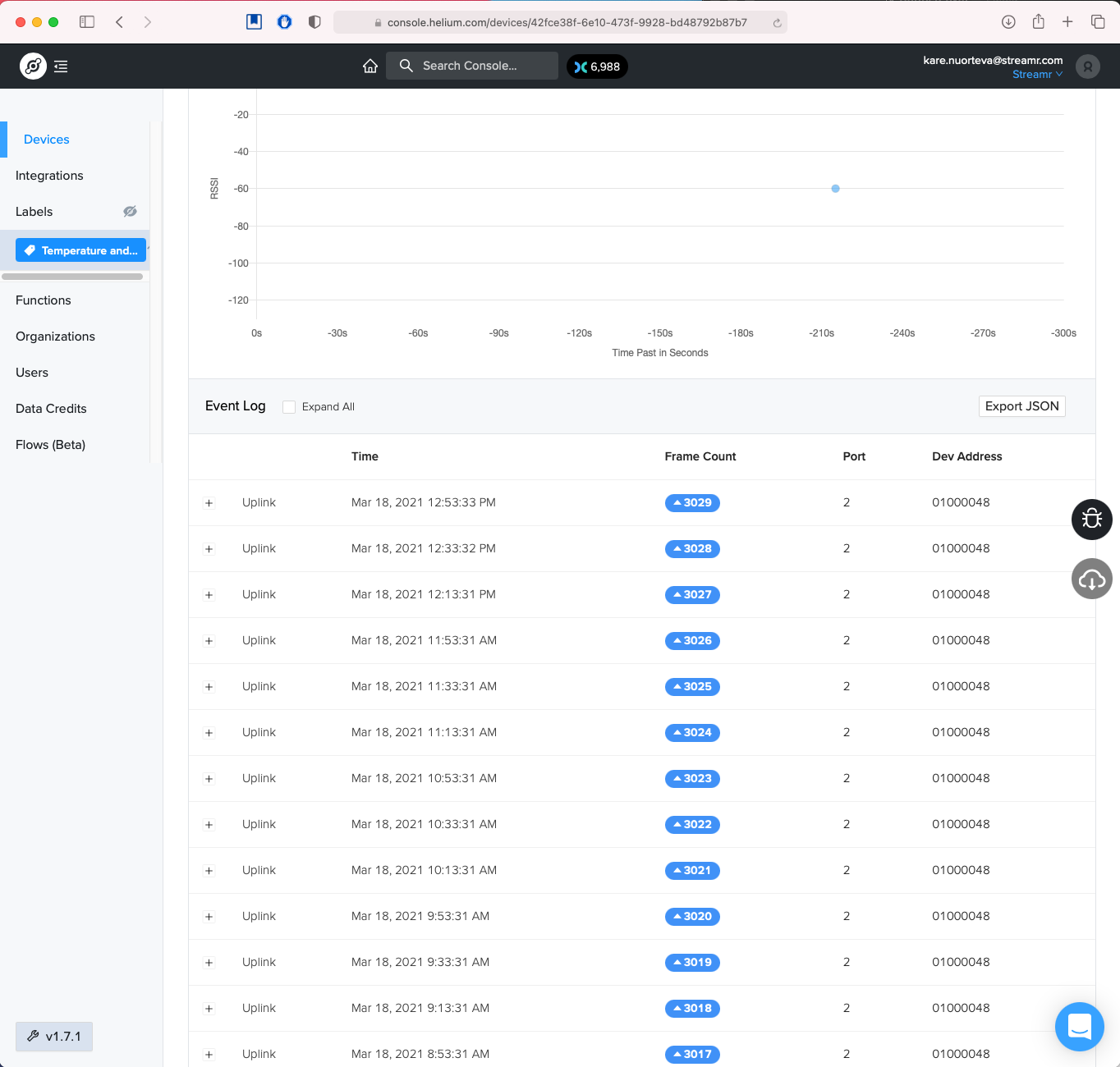

First, you need a sensor within range of the Helium Network. Once it joins the network, it will show up in the Helium Console. In the demo, we used an LHT65 LoRaWAN Temperature & Humidity Sensor connected to the Ambitious Ocean Panda hotspot in Helsinki, Finland.

Here’s what our LHT65 looks like when it’s onboarded to the Network.

And shortly after, here’s what it looks like when data flows in:

Once your device is connected, you’ll also need to run a piece of software that bridges data to and from the Streamr Network. At the moment, this is the helium-mqtt-adapter, which bridges incoming MQTT data to Streamr nodes run by others. Later this year, you’ll be able to run your own Streamr node instead; it will ship with an MQTT interface that also suits this setup.
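Conceptually, the bridge’s job is small: take each message arriving on an MQTT topic and republish it to the Streamr stream with the same ID, which is why the Uplink Topic in the Console integration is set to the stream ID. The TypeScript sketch below illustrates that idea; it is not the helium-mqtt-adapter’s actual code. `StreamPublisher` is a hypothetical stand-in for a Streamr client, kept synchronous for clarity even though a real client’s publish call is asynchronous, and the topic allowlist is a design choice of this sketch.

```typescript
// Hypothetical stand-in for a Streamr client; a real client's publish is async.
interface StreamPublisher {
  publish(streamId: string, content: object): void;
}

// Sketch of an MQTT-to-Streamr bridge: the MQTT topic is treated as the stream ID.
class MqttToStreamrBridge {
  constructor(
    private readonly publisher: StreamPublisher,
    // Only forward to expected streams, so a client can't publish to arbitrary ones.
    private readonly allowedStreams: ReadonlySet<string>,
  ) {}

  // Returns true if the message was forwarded, false if it was dropped.
  handleMessage(topic: string, payload: string): boolean {
    if (!this.allowedStreams.has(topic)) return false;
    let content: unknown;
    try {
      content = JSON.parse(payload);
    } catch {
      return false; // not valid JSON
    }
    if (typeof content !== "object" || content === null) return false;
    this.publisher.publish(topic, content);
    return true;
  }
}
```

Dropping malformed or unexpected messages at the bridge keeps the stream clean for every downstream consumer.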

Once you have your device and the adapter up and running, you’re ready to create a stream in Streamr Core. On your way there, you’ll need an Ethereum wallet like MetaMask, as this will provide you with an identity in both the Streamr and Ethereum networks. The stream you create will be the stream that contains your data once the integration is complete. In the stream settings, you can also enable storage to keep a history of the data.
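The example stream ID used later in the integration settings, 0x…/helium/lht65, has the form owner address, slash, path, where the owner is the Ethereum address of the wallet you sign in with. Assuming that form, here is a small hypothetical TypeScript helper for building and sanity-checking such IDs in your own tooling; it is a convenience check under that assumption, not Streamr’s authoritative validation.

```typescript
// Assumed stream ID layout: "<owner Ethereum address>/<path>", e.g. "0x…/helium/lht65".
const ETH_ADDRESS = /^0x[0-9a-fA-F]{40}$/;

interface StreamIdParts {
  owner: string;
  path: string;
}

function buildStreamId(owner: string, path: string): string {
  if (!ETH_ADDRESS.test(owner)) {
    throw new Error(`not an Ethereum address: ${owner}`);
  }
  const trimmed = path.replace(/^\/+|\/+$/g, ""); // drop leading/trailing slashes
  if (trimmed === "") throw new Error("stream path must not be empty");
  return `${owner}/${trimmed}`;
}

function parseStreamId(streamId: string): StreamIdParts {
  const slash = streamId.indexOf("/");
  const owner = slash < 0 ? streamId : streamId.slice(0, slash);
  const path = slash < 0 ? "" : streamId.slice(slash + 1);
  if (!ETH_ADDRESS.test(owner) || path === "") {
    throw new Error(`unexpected stream ID format: ${streamId}`);
  }
  return { owner, path };
}
```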

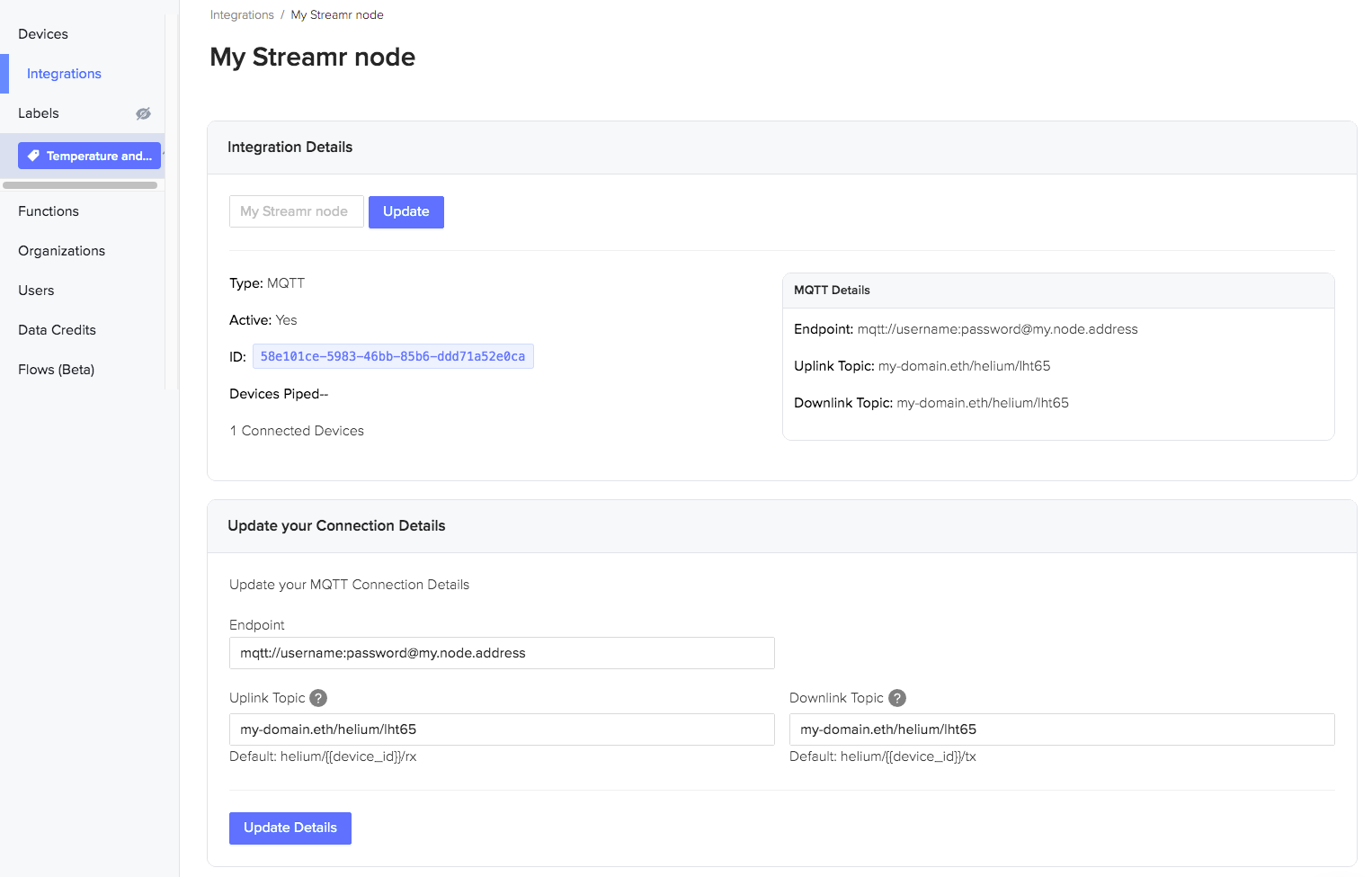

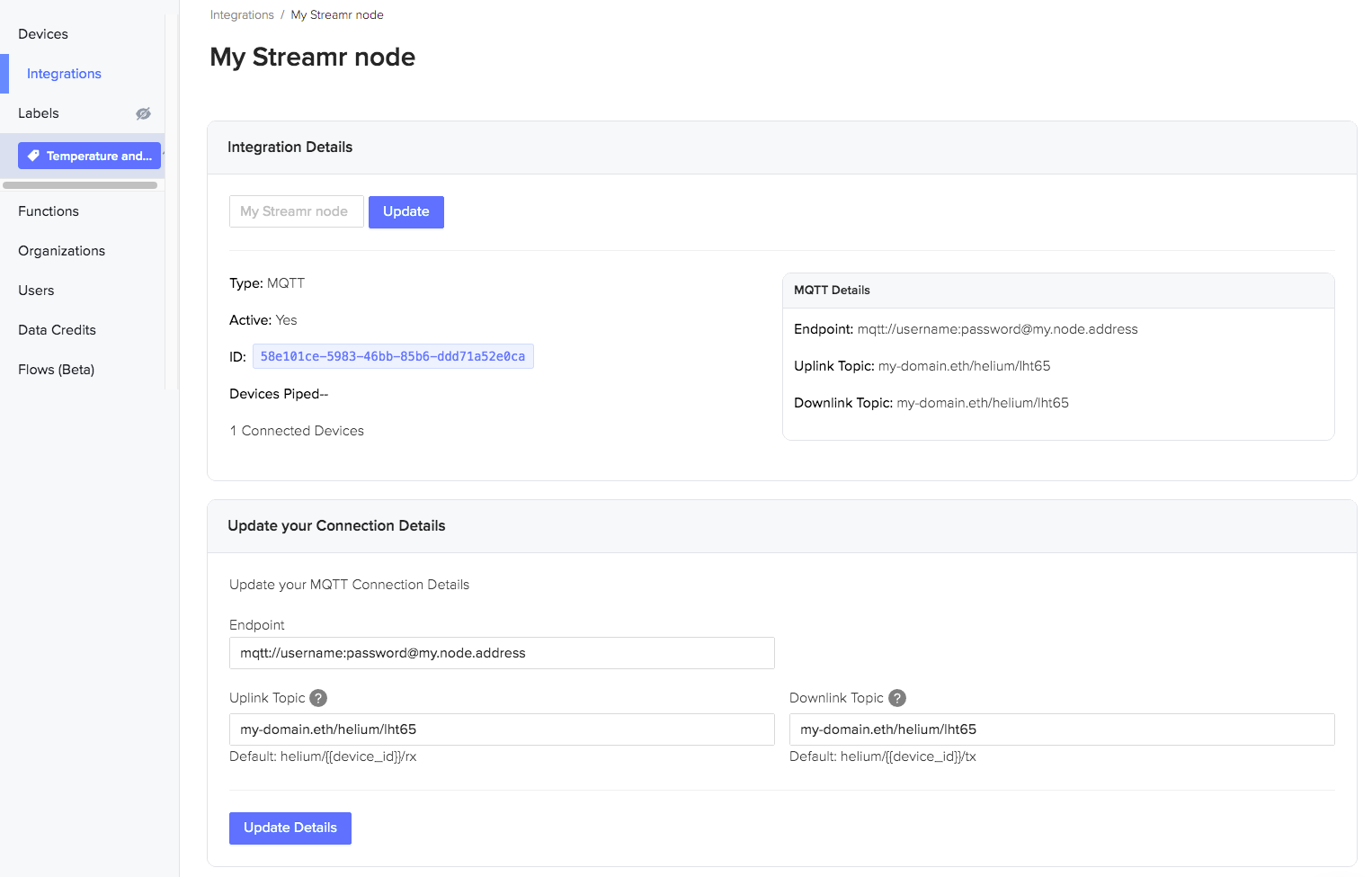

The integration will leverage the off-the-shelf MQTT integration available in the Helium Console, so the next step is to go to Integrations and add MQTT. Configure the integration as follows:

- Integration name: “My Streamr node” (or whatever you want)

- Endpoint: a URL pointing to the IP address where you’re running the helium-mqtt-adapter, for example mqtt://username:password@1.2.3.4. Here, username and password are the values of the environment variables you set earlier to secure the helium-mqtt-adapter.

- Uplink Topic: paste the ID of the stream you created in Streamr Core, for example 0x…/helium/lht65.

- Downlink Topic: paste the same stream ID as above.
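If you set up several devices, or just want to avoid typos, you can assemble these values in code. The TypeScript sketch below is hypothetical: the object and its field names are ours, and it simply collects the values you enter in the Console form. One detail worth handling is that if the adapter’s username or password contains characters such as @ or :, they must be percent-encoded in the endpoint URL, which `encodeURIComponent` does.

```typescript
// Values entered in the Helium Console MQTT integration form (field names are ours).
interface MqttIntegrationSettings {
  name: string;
  endpoint: string;
  uplinkTopic: string;
  downlinkTopic: string;
}

function buildMqttIntegration(
  name: string,
  host: string,     // IP address or hostname running the helium-mqtt-adapter
  username: string, // the credentials configured for the adapter
  password: string,
  streamId: string, // the stream ID from Streamr Core
  port?: number,    // omit to let the MQTT client use its default port
): MqttIntegrationSettings {
  // Percent-encode credentials so characters like "@" or ":" don't break the URL.
  const auth = `${encodeURIComponent(username)}:${encodeURIComponent(password)}`;
  const hostPart = port === undefined ? host : `${host}:${port}`;
  return {
    name,
    endpoint: `mqtt://${auth}@${hostPart}`,
    uplinkTopic: streamId,   // uplinks go to the stream's topic
    downlinkTopic: streamId, // this setup uses the same stream ID for downlinks
  };
}
```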

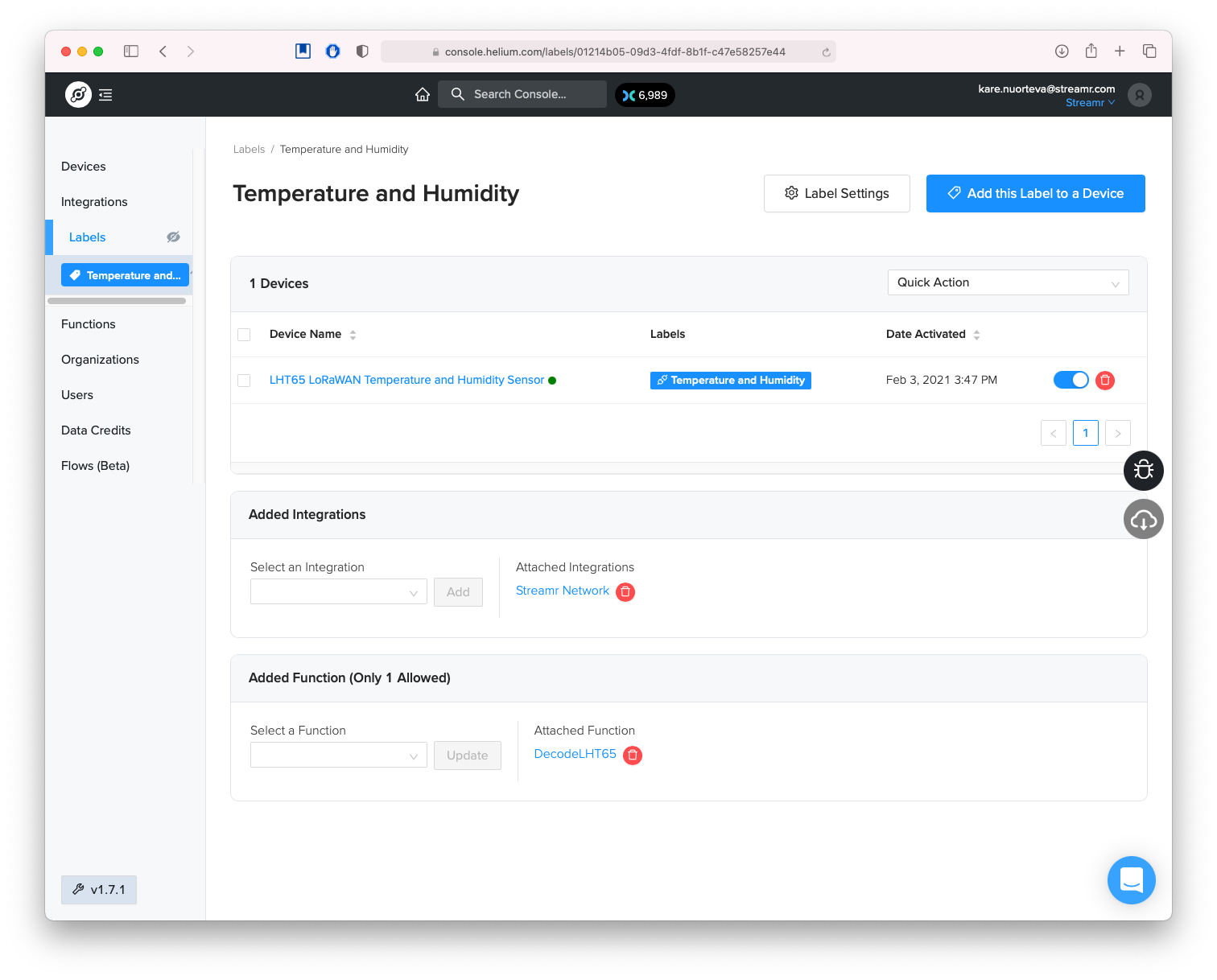

Finally, in the Helium Console, create a Label and add your Device and Integration to it. Here’s an example:

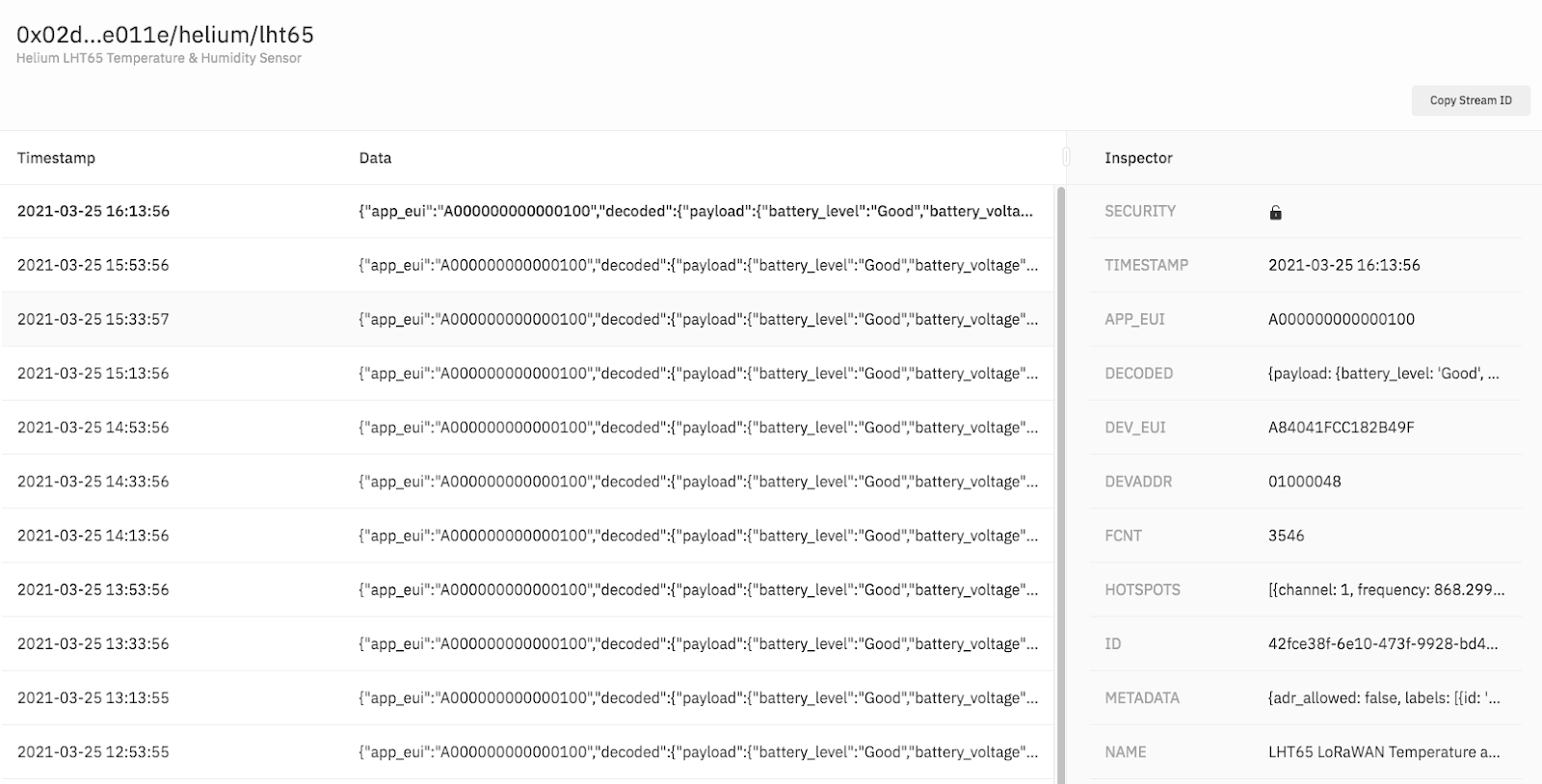

As soon as the sensor sends new measurements, the data points will flow to the Streamr Network, appearing in real-time in the stream inspector, as shown below:

If things are working so far, you’re basically done! One more recommendation, though: by default, the sensor sends its readings in encoded binary form, so to make your data easier to consume, you’ll probably want to convert those values to a human-readable form. To do this, add a Function in the Helium Console and apply it to the Label you created earlier. The exact code of the Function depends on which sensor you’re using; in the demo, we used one written for the LHT65.
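As an illustration of what such a Function does, here is a TypeScript sketch of an LHT65 decoder. It is not the demo’s code. It assumes the uplink layout from Dragino’s LHT65 documentation: battery voltage in bytes 0 to 1, built-in SHT20 temperature in bytes 2 to 3 (signed, hundredths of a degree), humidity in bytes 4 to 5 (tenths of a percent), an external sensor type in byte 6, and the external probe temperature in bytes 7 to 8. Layouts can differ between firmware versions, so check the manual for your device. Helium Console Functions are written in plain JavaScript, typically as a `Decoder(bytes, port)` function, so you would strip the type annotations before pasting it in.

```typescript
// Decoded LHT65 reading. Layout assumed from Dragino's LHT65 docs; verify for your firmware.
interface Lht65Reading {
  batteryV: number;             // battery voltage, volts
  tempC: number;                // built-in SHT20 temperature, °C
  humidityPct: number;          // built-in SHT20 relative humidity, %
  externalTempC: number | null; // external probe temperature, °C (null if absent)
}

// Mirrors the (bytes, port) arguments a Helium Console decoder Function receives.
function Decoder(bytes: number[], port: number): Lht65Reading {
  if (bytes.length < 9) {
    throw new Error(`expected at least 9 bytes on port ${port}, got ${bytes.length}`);
  }
  // Big-endian 16-bit reads; i16 sign-extends for temperatures below zero.
  const u16 = (i: number): number => ((bytes[i] << 8) | bytes[i + 1]) & 0xffff;
  const i16 = (i: number): number => (u16(i) << 16) >> 16;

  const rawExternal = u16(7); // byte 6 (external sensor type) is ignored here
  return {
    // Top two bits carry battery status; the low 14 bits are millivolts.
    batteryV: (u16(0) & 0x3fff) / 1000,
    tempC: i16(2) / 100,
    humidityPct: u16(4) / 10,
    // Assumption: 0x7FFF means no external probe is connected.
    externalTempC: rawExternal === 0x7fff ? null : i16(7) / 100,
  };
}
```

For example, the 11 bytes `CB F6 0B 45 01 05 01 7F FF 7F FF` decode to 3.062 V, 28.85 °C and 26.1 % with no external probe.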

Opportunities in the Streamr ecosystem

Now that your data stream is connected to Streamr, you can use the whole ecosystem to your advantage and make your data go further. You can, for example, connect the data to web apps in real-time using the client library for JS, plug it into Grafana for visualizations, connect to IFTTT for automation using community-built tools, or connect the data to smart contracts with Chainlink and soon API3.
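For a web app, most of the work lives in the message handler you call from the client’s subscription callback. The TypeScript sketch below is a hypothetical handler for the neighborhood-monitoring app from the introduction: it keeps the latest reading per device, ignores messages that arrive out of order, and averages only readings that are recent enough. It assumes decoded messages carry the `device`, `tempC` and `timestamp` fields shown, and it leaves out the subscription itself because the JS client’s `subscribe` signature has changed between library versions; check the client documentation for the version you use.

```typescript
// Assumed message shape after decoding; field names are hypothetical.
interface Reading {
  device: string;
  tempC: number;
  timestamp: number; // milliseconds since epoch
}

class NeighborhoodDashboard {
  private readonly latest = new Map<string, Reading>();

  // Call from your subscription callback, e.g. (msg) => dashboard.onMessage(msg).
  onMessage(reading: Reading): void {
    const previous = this.latest.get(reading.device);
    // Keep only the newest reading per device; late, older messages are ignored.
    if (previous === undefined || reading.timestamp >= previous.timestamp) {
      this.latest.set(reading.device, reading);
    }
  }

  // Average temperature over devices that reported within maxAgeMs of `now`.
  averageTempC(now: number, maxAgeMs: number): number | null {
    let sum = 0;
    let count = 0;
    this.latest.forEach((r) => {
      if (now - r.timestamp <= maxAgeMs) {
        sum += r.tempC;
        count += 1;
      }
    });
    return count === 0 ? null : sum / count;
  }
}
```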

For data monetization, you can wrap your stream(s) into a product and sell it on the Streamr Marketplace. Other people can then pay a price you set for continuous access to your streams. If the data from your devices alone is not enough to make a compelling product, you can join (or start!) a Data Union, a framework that lets you join forces with others producing similar data and “crowdsell” it together using the revenue-sharing model that Data Unions implement.
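To make the revenue sharing concrete, here is a worked TypeScript sketch. It assumes a simple model in which an admin fee (expressed here in basis points) is taken from each sale and the rest is split equally among active members; this is our simplified reading of how Data Unions distribute revenue, and the actual contracts may include other fees or rules, so consult the Data Union documentation before relying on it. Amounts are `bigint` values in the token’s smallest unit, so integer division can leave a small remainder, which the sketch reports rather than silently dropping.

```typescript
// Simplified, assumed Data Union payout model: admin fee first, then an equal split.
interface RevenueSplit {
  adminShare: bigint;    // paid to the Data Union admin
  perMember: bigint;     // paid to each active member
  undistributed: bigint; // rounding remainder left after the equal split
}

function splitRevenue(revenue: bigint, adminFeeBps: bigint, activeMembers: number): RevenueSplit {
  if (revenue < 0n) throw new Error("revenue must be non-negative");
  if (adminFeeBps < 0n || adminFeeBps > 10000n) throw new Error("fee must be 0..10000 bps");
  if (!Number.isInteger(activeMembers) || activeMembers <= 0) {
    throw new Error("need at least one active member");
  }
  const adminShare = (revenue * adminFeeBps) / 10000n; // bigint division truncates
  const memberPool = revenue - adminShare;
  const members = BigInt(activeMembers);
  const perMember = memberPool / members;
  const undistributed = memberPool - perMember * members;
  return { adminShare, perMember, undistributed };
}
```

With a 10 % fee (1000 bps), 1001 units of revenue and 3 members, the admin receives 100, each member 300, and 1 unit remains undistributed.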

With this integration, Helium and Streamr can provide their users with a fully decentralized and trustless global data infrastructure for a “first-to-last-mile” IoT pipeline. Users benefit from the network effects of composable ecosystems and no longer need to accept the vendor lock-in or privacy issues inherent in centralized cloud services to connect their data to applications and data consumers. Instead, they can rely on networks made for, and operated by, the people. They stay in control of the data they produce, and they gain the opportunity to participate in the emerging data economy.